Using GHDL for interactive simulation under Linux

The free and open-source VHDL simulator 'GHDL' has been around for many years, but like many other open-source tools, it has attracted limited attention from the industry. I can hear you thinking: 'If it doesn't cost money, it can't be worth it'. Well, I hope this short overview will change your mind and even whet your appetite for more. With some extensions, you can do some quite funky stuff with it that will save you a lot of debugging work: for example, simulate your real-world software against a virtual FPGA device. This can be beneficial to:

- The VHDL programmer having to interface his design with firmware routines

- The C programmer having to verify his code down to the hardware

- Any student to play with VHDL at home at no cost

Be warned, however: this article is kind of "Linux only". Although there is a GHDL version for Windows, I have no clue how well the presented solution works under other operating systems. Why Linux at all? Over the years it has turned out to be the easiest environment for all sorts of development, for me at least. The Xilinx toolchain, for example, was found to run much faster under Linux than under Windows (for unknown reasons).

GHDL overview

Ok, so what is GHDL really? It has been on my radar for years, but I had never seriously used it until a few weeks ago. This doesn't make me an expert yet, but that actually speaks for GHDL. Being old-fashioned when it comes to developing reliable designs, I rarely switch tools. The fact is that, after giving open source a try in the VHDL world as well, an increasing number of my projects are now GHDL-powered.

But let's get to the point and look at GHDL's most important attributes:

- It is written in Ada (as we dimly remember, VHDL is somewhat based on Ada) and compiled with the GCC toolchain

- It is command line based, thus preferably Makefile driven (using the GNU make tool)

- You can choose from various IEEE standard deviations (we'll touch this later)

You might have been repelled by the fact that there is very sparse documentation available about GHDL and you have to do the time consuming scattered-post-picking from all the mailing lists. Let us just try to change that - a little.

You might also be aware that GHDL is 'just' a plain simulator that can write its output into wave files. The actual wave display is a separate thing, but it is covered nicely by the gtkwave application. You simply run your simulation executable with some output options, (re-)load the wave file within gtkwave and you're set with a fast and convenient wave display.

If you're a Debian guy or gal, you can just

apt-get install ghdl gtkwave

..or use your favorite package manager. For all other distributions or systems, check the official GHDL website http://ghdl.free.fr/.

GHDL to go

There are plenty of tutorials around, but we'll start with a very simple standalone example anyhow, just to see how it works. Download simple.vhdl via this link and guess how it works: simple.vhdl

No, let's just simulate it. You do have GHDL installed by now, don't you?

Instead of having to write a Tcl script and a project file like you'd be used to for Isim, GHDL is much simpler:

<code>ghdl -i simple.vhdl        # Import our above example into the current 'work' cache
ghdl -m simple             # Analyze, elaborate and build an exe from the top level entity 'simple'
./simple --wave=test.ghw   # Run the simulation and output a wave file</code>

Now you should have a test.ghw wavefile in the current directory. Just look at it using the gtkwave command:

gtkwave test.ghw

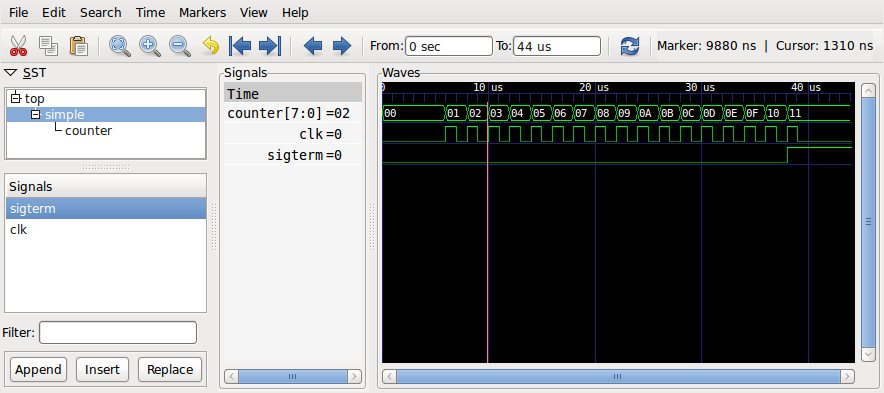

and - oops, why don't I see a waveform? Well, you first have to select the desired signals from the tree view in the upper left pane (labeled SST). Unlike Isim, GHDL simply stores all signals in the wave file, but compresses them well. You can save your current view (by ->) so you don't have to browse through your signals again. Next time, just append the name of your view file, like:

<code>gtkwave test.ghw view.sav</code>

Figure 1. Simple example simulation displayed in GTKwave

(Non-) Standards

You may have been told by a number of VHDL experts to stay away from the std_logic_arith library packages. Believe me, I always keep forgetting the gory details why, but let's just agree that they are "dirty" and read about all the details elsewhere. However, Xilinx for example uses those dirty references all over the place, so we need to rely on them if we want to simulate their primitives.

But let's emphasize one thing first: GHDL has been found to be very picky about badly written VHDL, while many vendor specific libraries have been lazily coded. Anyhow, there are various options to make GHDL accept your or other people's code, using flags like --std or the --ieee options. These are in fact quite well documented.

When migrating code that is, for example, verified to work using Isim, you may still have to make some adaptations to GHDL's strict interpretation of the standard, depending on how 'clean' your code is. That sounds like another reason to be sceptical about GHDL, but then again, it has become pretty darn good at pointing out errors over all these years, so you should have no problem boiling down the error report to a few warnings and ending up with sane VHDL code. This is one thing I consider most valuable about GHDL. Really, I expect the tools to teach me the standard, not 1000 page reference manuals.

Vendor specific simulation libraries

So you are ready to tackle a larger project and you have a bit of time, right? Assume you're using a Xilinx FPGA and some of the built-in hard instances, like a DCM (digital clock manager) module. Rather than rephrasing what we learnt from another source, we'll simply link to this very useful paper, which describes in a few words how Unisim components can be used in GHDL. Likewise, this works for the XilinxCoreLib, although some cores turned out to be problematic. Anyhow, here it is:

http://www.dossmatik.de/ghdl/ghdl_unisim_eng.pdf

Simulation aspects

Assuming you have verified your simulations for many years using various simulators, you'll probably nod at the following development process:

1. Simulate/verify your low level entity under various scenarios with a simple test bench

2. Insert verified components into your higher level architecture

3. Simulate the parent level, find errors, possibly realize your test bench in (1) was not covering all scenarios

4. Repeat from (1) and refine

5. Your software developer colleague finds more errors in the real world usage that don't turn up in the simulation. Repeat your entire debugging procedure and find the missing scenario

So the obvious question is: How can this simulation technique be improved? Instead of shoveling many static test vectors and data files into our simulation, couldn't we just make our projected software speak directly to the simulation?

If we design a certain framework right, chances are high that we end up with a solution that works in the simulation as well as under real conditions. Of course it will run much slower in the simulation, but so what, we can run the entire test bench over night.

But: We will have to extend GHDL.

GHDL extensions

The first question for the open-source Linux hacker, after taking the first steps with a program and liking it, might be: How can it be extended with my own code? The next thought might be: But I don't want to touch a framework in a language I'm not fluent in (ok, Ada is similar to VHDL, but we got used to programming hardware with it, not software). No problem! It turns out, since it's all GCC, that there is a more or less convenient calling convention, so we can - in theory - easily extend the generated simulator code with our own library routines. There is an existing interface specification called VPI that allows access to VHDL structures through a defined API. It actually originates from the Verilog standard, but was implemented for VHDL as well. This is nothing new; you will find that quite a few commercial simulators have this interface. Specifically for VHDL, there is a VHPI interface that allows calling external functions. We're gonna focus on the latter.

The VHPI interface turns out to be quite simple: You can pretty much directly call a C function from a VHDL program. However, the interface and calling conventions are just not too well documented, as they might be subject to change (This is what the GHDL documentation tells us).

So note that we are proceeding into a kind of hackish area: No one will guarantee that our extensions will still work in 10 years without change. But then again, would you get that stability with commercial tools? I don't think so. So let's proceed, we want to see a solution before dawn.

One resource that has boosted this development a lot is Yann Guidon's collection of extensions at http://ygdes.com/GHDL/. Among other nice solutions, he demonstrates how a simulation can be run in real time, how data can be read from the parallel port or how graphical data can be displayed on a Linux frame buffer.

Let's start off with the most basic example to demonstrate how external C routines are called from GHDL:

A simple interface

Let us take Yann's "bouton" (Push button) example. It can be found at the above listed web site in the GHDL/io_port folder of his GHDL extensions .tgz package. It polls the pins from a parallel port device under linux. We'll not elaborate on the hardware access functionality, just look at how GHDL calls C routines. For that, you'd define a function prototype in VHDL, but with some special attributes as follows:

<code>package boutons is
  procedure lecture_boutons (bouton1, bouton2, bouton3, bouton4: out std_ulogic);
  attribute foreign of lecture_boutons : procedure is "VHPIDIRECT lecture_boutons";
end boutons;</code>

We are not done yet, this is just the prototype. We'll also need some kind of function stub to actually wrap our code. In the same file, you'll find a package body:

<code>package body boutons is
  procedure lecture_boutons (bouton1, bouton2, bouton3, bouton4: out std_ulogic) is
  begin
    assert false report "VHPI" severity failure;
  end lecture_boutons;
end boutons;</code>

That is the VHDL side. The C side is much shorter, here's the prototype for our button read function:

<code>void lecture_boutons(char boutons[4]);</code>

So you can see that the above buttons map into a char array. But what's contained in these chars? Remember, a std_logic_vector is not just an array of bits that are high or low. There are a few more states, like 'X', 'U' and 'Z'. Therefore, a single VHDL std_logic value is one char wide. The encoding is listed in the ghpi.h file of our GHDL extensions.
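To make the encoding a bit more concrete, here is a minimal C sketch. It assumes that each std_ulogic arrives as one byte holding the position of the value in the IEEE 1164 enumeration ('U' = 0 up to '-' = 8). The names `sul_value`, `sul_to_char`, `sul_from_bool` and `fill_buttons` are made up for this illustration and are not the identifiers used in ghpi.h; `fill_buttons` merely stands in for the parallel port access.

```c
#include <assert.h>
#include <stddef.h>

/* Assumed byte values: position numbers of std_ulogic in IEEE 1164 order.
 * Hypothetical names, not those found in ghpi.h. */
enum sul_value {
    SUL_U = 0, /* 'U' uninitialized   */
    SUL_X,     /* 'X' forcing unknown */
    SUL_0,     /* '0' forcing low     */
    SUL_1,     /* '1' forcing high    */
    SUL_Z,     /* 'Z' high impedance  */
    SUL_W,     /* 'W' weak unknown    */
    SUL_L,     /* 'L' weak low        */
    SUL_H,     /* 'H' weak high       */
    SUL_DC     /* '-' don't care      */
};

static const char sul_chars[] = "UX01ZWLH-";

/* Translate one simulator byte into its VHDL character literal,
 * or '?' if the byte is outside the nine defined states. */
char sul_to_char(char v)
{
    unsigned char i = (unsigned char) v;
    return (i < sizeof(sul_chars) - 1) ? sul_chars[i] : '?';
}

/* Convert a plain boolean level into a strong '0' or '1'. */
char sul_from_bool(int level)
{
    return level ? SUL_1 : SUL_0;
}

/* Hypothetical stand-in for the hardware access:
 * bit n of 'pins' drives button n. */
void fill_buttons(char boutons[4], unsigned pins)
{
    assert(boutons != NULL);
    for (int i = 0; i < 4; i++)
        boutons[i] = sul_from_bool((pins >> i) & 1);
}
```

A routine like `lecture_boutons` would fill its four chars this way, so that the VHDL side sees proper '0' and '1' values rather than raw 0 and 1 bytes (which would read as 'U' and 'X').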

Now, after we have written all the functionality, how do we bake our simulation?

It actually makes sense to collect all these C extensions in a library and link against it, like you've probably done with GCC many times. So we'll first create a library with all the extensions:

ar ruv libmysim.a boutons.o my_other_extensions.o

Then, we'll translate the simulation VHDL a little differently than in the above example. First, we analyze all the files, then we explicitly elaborate the simulation and link it against our library with the ghdl -e command, specifying link options:

<code>ghdl -a simbutton.vhdl boutons.vhdl     # Analyze
ghdl -e -Wl,-L. -Wl,-lmysim simbutton   # Elaborate and link</code>

'simbutton' is the top level entity of your simulation again. You'd probably want to put these commands into a Makefile.

We have found so far, using Yann's examples, that we can call C code from the GHDL simulation repeatedly, but we cannot easily make a C program control the entire simulation in the sense of being a 'master'. But rethinking this wish probably makes us realize that we don't have this situation in the real world either: if a front end is speaking to an FPGA device, it mostly happens asynchronously through some command interface, for example a USB FIFO. Why don't we just mimic this?

Using a pipe

The simplest FIFO implementation we can think of is the one that we don't have to code ourselves. It is generously offered by any POSIX compliant system. Even Windows offers pipes, but due to the unified filesystem nature of Unix-like systems, things work more nicely in Linux -- creating a FIFO is just a matter of the following command:

mknod sim_fromuser p

or even easier:

mkfifo sim_fromuser

This simply creates a file in your current working directory named sim_fromuser. Whatever one process writes into this file can be read by another process on the other side. To communicate in both directions, we likewise need another FIFO called sim_touser.
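The same thing can be done from C with the POSIX `mkfifo()` call. The following is a minimal sketch, not code from our extensions: the function name `fifo_roundtrip` is made up, and for brevity both ends are opened within one process, whereas in practice the simulation and the user program would each hold one end.

```c
#include <assert.h>
#include <fcntl.h>
#include <stddef.h>
#include <sys/stat.h>
#include <sys/types.h>
#include <unistd.h>

/* Create a FIFO at 'path', push one byte through it and return the
 * byte that came out on the other side, or -1 on any failure. */
int fifo_roundtrip(const char *path, unsigned char value)
{
    unsigned char got = 0;
    int result = -1;

    assert(path != NULL);
    unlink(path);                    /* remove a stale leftover, if any */
    if (mkfifo(path, 0600) != 0)
        return -1;

    /* A non-blocking read-only open returns at once, writer or not. */
    int rd = open(path, O_RDONLY | O_NONBLOCK);
    /* With a reader present, the write-only open does not block either. */
    int wr = (rd >= 0) ? open(path, O_WRONLY) : -1;

    if (rd >= 0 && wr >= 0 &&
        write(wr, &value, 1) == 1 &&
        read(rd, &got, 1) == 1)
        result = got;

    if (wr >= 0) close(wr);
    if (rd >= 0) close(rd);
    unlink(path);                    /* clean up the FIFO file again */
    return result;
}
```

Note the order of the `open()` calls: a blocking open of a FIFO waits until the other end shows up, so opening the reading end in non-blocking mode first avoids a deadlock in this single-process demonstration.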

So our short coding TODO list is:

- Write a polling interface to the pipe in C that just reads from/writes to files

- Write a little VHPI interface specification

- Code a clock sensitive process in your test bench that checks for available data from the pipe and reads it, if there is any. Likewise for the reverse data flow direction.
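The first item on this list could look roughly like the following C sketch. It uses the POSIX `poll()` call with a zero timeout, so the simulation is never stalled when no data is waiting. The function name `pipe_poll_byte` and its return convention are assumptions made for this illustration; they are not the API of our extensions.

```c
#include <assert.h>
#include <poll.h>
#include <stddef.h>
#include <unistd.h>

/* Try to fetch one byte from 'fd' without ever blocking.
 * Returns 1 and stores the byte if one was available,
 *         0 if nothing is waiting right now,
 *        -1 on error or when the writing side has gone away. */
int pipe_poll_byte(int fd, unsigned char *data)
{
    struct pollfd p = { .fd = fd, .events = POLLIN, .revents = 0 };

    assert(data != NULL);
    if (poll(&p, 1, 0) < 0)          /* timeout 0: return immediately */
        return -1;
    if (!(p.revents & POLLIN))       /* no data: idle or hung up? */
        return (p.revents & (POLLHUP | POLLERR)) ? -1 : 0;
    return (read(fd, data, 1) == 1) ? 1 : -1;
}
```

Called once per clock cycle from the test bench, a routine of this kind either hands over the next byte or reports that the pipe is idle, which is the behaviour a hardware FIFO with an 'empty' flag would show.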

So let's start extending our mysim library with another package called ghpi_pipe. We've used the prefix ghpi because this is all a little GHDL specific and not according to the VHPI specifications. Before we bore you with another program listing, you might be inclined to download our GHDL extensions.

However, let's list the VHDL function prototypes:

<code>function openpipe(name: string) return pipehandle_t;  -- Open pipe, return handle
procedure closepipe(handle: pipehandle_t);            -- Close pipe
procedure pipe_in(handle: pipehandle_t; data: out unsigned(7 downto 0);
                  flags: inout pipeflag_t);           -- Pipe input channel handler
procedure pipe_out(handle: pipehandle_t; data: in unsigned(7 downto 0);
                   flags: inout pipeflag_t);          -- Pipe output handler</code>

As you can see, we have defined some handle and flag types. This makes life easier once you change things, so it makes sense to use those type definitions.

Since we want the least possible function calling overhead, we've packed all the I/O functionality into one pipe_in and one pipe_out function. Depending on the flags passed, these functions either check whether data is available (or can be written), or perform the actual read or write. The API is documented in detail in the doc/ subfolder of our GHDL extension package. Normally, you'd call these functions from a clock sensitive process and use a global signal to save the status flags. But here we'd come to the point where we say: Read the source, Luke. Or compile again, Sam.
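To illustrate the idea of a single flag-driven entry point, here is a rough C sketch of how the input side might be organized. Everything in it is hypothetical: the flag names `PF_CHECK`, `PF_READ`, `PF_READY` and `PF_ERROR`, their values and the function `pipe_in_sketch` are invented for this example, and a plain file descriptor takes the place of the handle type. The real flags and semantics are the ones documented in the doc/ subfolder.

```c
#include <assert.h>
#include <poll.h>
#include <stddef.h>
#include <unistd.h>

/* Hypothetical flag bits, invented for this sketch. */
enum {
    PF_CHECK = 0x01, /* request: only test whether data is pending */
    PF_READ  = 0x02, /* request: fetch one byte if there is one    */
    PF_READY = 0x10, /* status:  data is pending or was fetched    */
    PF_ERROR = 0x20  /* status:  pipe closed or I/O error          */
};

/* One entry point for both actions, so the simulation needs a single
 * foreign call per clock cycle. Request bits are read from *flags,
 * status bits are written back into it. */
void pipe_in_sketch(int fd, unsigned char *data, unsigned *flags)
{
    struct pollfd p = { .fd = fd, .events = POLLIN, .revents = 0 };
    unsigned request;

    assert(data != NULL && flags != NULL);
    request = *flags;
    *flags = request & (PF_CHECK | PF_READ);  /* clear old status bits */

    if (poll(&p, 1, 0) < 0) {                 /* never block */
        *flags |= PF_ERROR;
        return;
    }
    if (!(p.revents & POLLIN)) {              /* nothing to read */
        if (p.revents & (POLLHUP | POLLERR))
            *flags |= PF_ERROR;
        return;
    }
    if (request & PF_READ)                    /* consume one byte */
        *flags |= (read(fd, data, 1) == 1) ? PF_READY : PF_ERROR;
    else                                      /* just report it */
        *flags |= PF_READY;
}
```

On the VHDL side, the flags would travel through the inout parameter of the procedure, which is why a signal or variable that keeps them between clock cycles comes in handy.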

There's more to it: using the same framework, you could also make use of named sockets. Remember: It's just a file! However, as soon as it comes to networking, there are cleaner and nicer ways to do it, which brings us to the drawbacks of pipes:

- They give little control over proper FIFO behaviour (like a hardware FIFO would have)

- They are very OS specific (no easy go under native Windows)

- They could be slow

- They're not bidirectional, each communication direction needs a separate pipe

- They're bytewise oriented by default

Workarounds are possible, but there are various reasons why we shouldn't bother and should move on to a proper FIFO solution instead. For now though, we will end here and postpone the FIFO talk to a possible next article...

References

Here's a list of references:

- GHDL homepage: http://ghdl.free.fr/

- René Doss: A useful primer on simulating vendor specific libraries like Unisim with GHDL: http://www.dossmatik.de/ghdl/ghdl_unisim_eng.pdf

- Yann Guidon's GHDL extensions: http://ygdes.com/GHDL/

- Our GHDL extensions: https://github.com/hackfin/ghdlex
