Introducing the VPCIe framework

Introduction

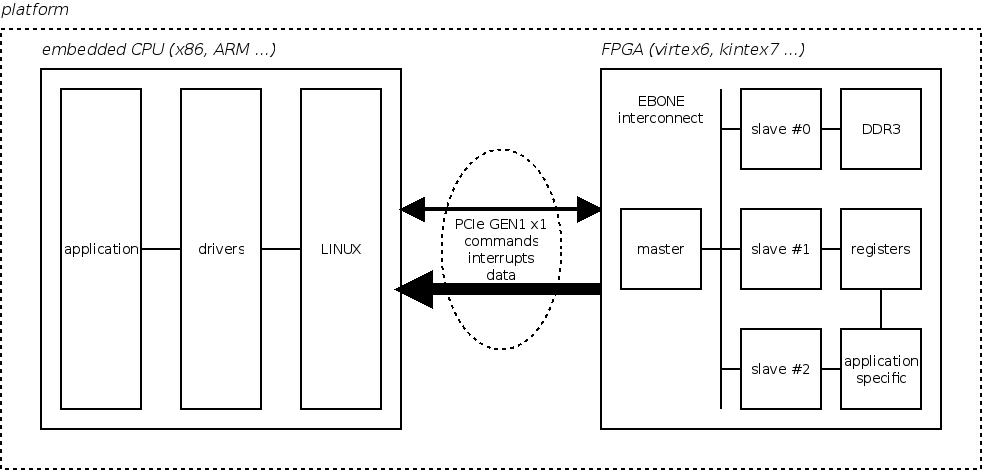

My daily work involves platforms featuring an embedded CPU communcating with a FPGA device over a PCI Express link (PCIe for short). The main purpose of this link is for the CPU to convey configuration, control, and status commands to hardware slaves implemented in the FPGA. For data intensive applications (2D XRay detector readout backend), this link can also be used as a DMA channel to transfer data from the FPGA to the CPU memory. Finally, a slave can interrupt the CPU using the PCIe MSI mechanism. The following figure summarizes a typical platform architecture:

As a software engineer, I am mostly in charge of implementing the Linux applications and drivers that interface the CPU with the FPGA. I am also involved in implementing the DMA engine and its associated logic, both written in VHDL.
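On the Linux side, a quick way to issue configuration and control accesses from user space, without writing a dedicated driver, is to map a device BAR through the sysfs `resourceN` files. The sketch below assumes the FPGA exposes its registers in BAR0 at the hypothetical PCI address `0000:01:00.0`; the `Bar` class and the register offsets are illustrative only, and mapping a real BAR requires root. Since VPCIe runs the CPU software unmodified, such code should behave the same inside the QEMU guest.

```python
import mmap
import os
import struct


class Bar:
    """32-bit little-endian register accessor over a mapped PCIe BAR.

    It accepts any writable mmap object, so it can be exercised without hardware.
    Note: Python does not guarantee that each access becomes a single aligned
    32-bit bus transaction; a C driver is the safer route for production code.
    """

    def __init__(self, mem: mmap.mmap):
        self._mem = mem

    @classmethod
    def open_sysfs(cls, bdf: str, index: int = 0, size: int = 4096) -> "Bar":
        # bdf is the device address, e.g. "0000:01:00.0" (hypothetical here)
        path = f"/sys/bus/pci/devices/{bdf}/resource{index}"
        fd = os.open(path, os.O_RDWR | os.O_SYNC)
        try:
            mem = mmap.mmap(fd, size, mmap.MAP_SHARED,
                            mmap.PROT_READ | mmap.PROT_WRITE)
        finally:
            os.close(fd)  # the mapping remains valid after closing the fd
        return cls(mem)

    def read32(self, offset: int) -> int:
        # PCIe registers are little-endian regardless of host byte order
        return struct.unpack_from("<I", self._mem, offset)[0]

    def write32(self, offset: int, value: int) -> None:
        struct.pack_into("<I", self._mem, offset, value & 0xFFFFFFFF)
```

On the target, `Bar.open_sysfs("0000:01:00.0").write32(0x04, 1)` would write to a (hypothetical) control register at offset 0x04 of BAR0. Wrapping a plain anonymous mapping, `Bar(mmap.mmap(-1, 4096))`, gives a register-free stand-in for experimenting off target.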

VHDL functional simulation is an invaluable tool as interactions between the hardware components grow more complex. It helps validate the implementation at signal granularity under situations and scenarios that would otherwise be difficult to reproduce. Since errors can be found without setting up the FPGA device, development time is greatly reduced.

However, this simulation process does not involve the other part of the platform, i.e., the embedded CPU running Linux and its software. A few months ago, I started implementing a framework that allows the CPU, its software, and the device VHDL code to be simulated as a whole. Since it focuses largely on virtualizing PCIe, I called this project VPCIe (short for virtual PCIe). It is an open source project hosted on GitHub:

https://github.com/texane/vpcie

The repository contains installation instructions and examples. Slides from a previous presentation are also available. The following section presents the framework architecture.

Framework architecture

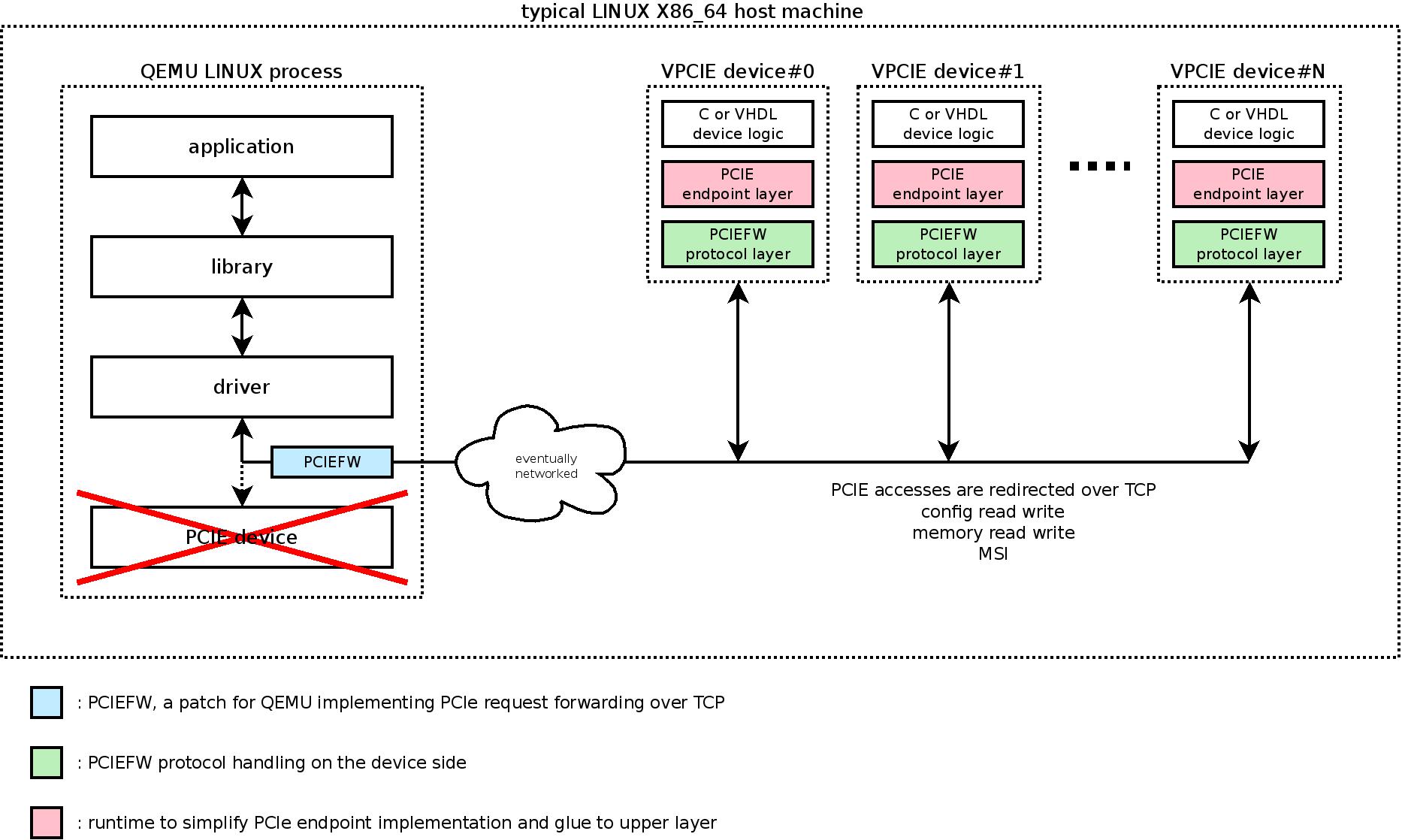

Simply put, VPCIe virtualizes the PCIe physical link for both the embedded CPU and the VHDL code. That is, it catches PCIe accesses made to / from the embedded CPU and redirects them to / from a process running the VHDL functionnal simulation.

There are several ways to catch PCIe accesses. VPCIe uses the following approach: the embedded CPU is emulated inside a QEMU (http://www.qemu.org) virtual machine, instrumented so that PCIe accesses can be trapped. At the VHDL side, PCIe accesses are explicitly done through a specially crafted entity, replacing the usual XILINX IP. The VHDL code is compiled using GHDL (http://home.gna.org/ghdl/) and linked against the VPCIe runtime, which knows how to communicate with the virtual machine.

The following picture shows the VPCIe architecture:

The main advantage of this approach is that the CPU software, including Linux and the drivers, runs without any modification. It could even run Windows, but I have never tried it.
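Because the trapping happens inside the emulator, the guest driver keeps issuing ordinary loads and stores; it is the instrumented virtual machine that must turn each of them into a message for the simulation process. The sketch below shows one possible serialization of a trapped access. The field layout, the `Access` class, and the `OP_*` constants are hypothetical and are not VPCIe's actual wire protocol; the repository is the reference for that.

```python
import struct
from dataclasses import dataclass

# Hypothetical message layout for one trapped access (NOT VPCIe's protocol).
# Little-endian, no implicit padding:
#   u8  op    OP_READ, OP_WRITE or OP_COMPLETION
#   u8  bar   BAR index targeted by the guest
#   u8  size  access width in bytes (1, 2, 4 or 8)
#   u8  reserved
#   u64 addr  offset within the BAR
#   u64 data  write data or read-completion data, 0 for read requests
OP_READ, OP_WRITE, OP_COMPLETION = 0, 1, 2
_HEADER = struct.Struct("<BBBxQQ")
MSG_SIZE = _HEADER.size  # 20 bytes


@dataclass(frozen=True)
class Access:
    op: int
    bar: int
    size: int
    addr: int
    data: int = 0

    def encode(self) -> bytes:
        if self.size not in (1, 2, 4, 8):
            raise ValueError(f"unsupported access width: {self.size}")
        # Keep only the bytes that fit in the access width
        mask = (1 << (8 * self.size)) - 1
        return _HEADER.pack(self.op, self.bar, self.size, self.addr,
                            self.data & mask)

    @classmethod
    def decode(cls, msg: bytes) -> "Access":
        op, bar, size, addr, data = _HEADER.unpack(msg)
        return cls(op, bar, size, addr, data)
```

In this sketch, a guest load from BAR0 offset 0 would be sent as `Access(OP_READ, 0, 4, 0x0)`, and the virtual machine would hold the load until an `OP_COMPLETION` message carrying the data comes back. A fixed-size header keeps parsing trivial on the simulation side.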

QEMU is also a very flexible emulator. In particular, platform hardware resources such as the CPU, memory, and peripheral devices can easily be adapted to the application requirements.

Communication between the CPU virtual machine and the VHDL simulation uses one or more TCP connections. This means that there can be multiple concurrent VHDL simulations (hence multiple PCIe devices), and that they can run on physically distant machines.
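Since each link is a plain TCP stream, a simulated device is essentially a server that answers access requests, and the virtual machine is its client. The sketch below shows both ends under stated assumptions: the 9-byte request framing, the function names, and the `regs` dictionary standing in for the VHDL model are all hypothetical. In VPCIe, the runtime linked into the GHDL executable plays the server role and the simulation itself produces the read data.

```python
import socket
import struct
import threading

# Hypothetical framing for this sketch only (not VPCIe's protocol):
#   request  = op:u8, addr:u32, data:u32   (op 1 = write, anything else = read)
#   response = data:u32                     (read data, or 0 to ack a write)
REQ = struct.Struct("<BII")
RSP = struct.Struct("<I")


def recv_exact(sock: socket.socket, n: int) -> bytes:
    """Read exactly n bytes; TCP is a byte stream, so recv() may return less."""
    buf = bytearray()
    while len(buf) < n:
        chunk = sock.recv(n - len(buf))
        if not chunk:
            raise ConnectionError("peer closed the connection")
        buf += chunk
    return bytes(buf)


def serve_device(conn: socket.socket, regs: dict) -> None:
    """Device side: answer accesses until the virtual machine disconnects."""
    with conn:
        while True:
            try:
                op, addr, data = REQ.unpack(recv_exact(conn, REQ.size))
            except ConnectionError:
                return
            if op == 1:
                regs[addr] = data
                conn.sendall(RSP.pack(0))
            else:
                conn.sendall(RSP.pack(regs.get(addr, 0)))


def access(sock: socket.socket, op: int, addr: int, data: int = 0) -> int:
    """VM side: forward one access and block until the device answers."""
    sock.sendall(REQ.pack(op, addr, data))
    return RSP.unpack(recv_exact(sock, RSP.size))[0]


def listen(port: int, regs: dict) -> None:
    """One listening port per simulated device; each may run on its own host."""
    with socket.create_server(("", port)) as srv:
        while True:
            conn, _ = srv.accept()
            threading.Thread(target=serve_device, args=(conn, regs),
                             daemon=True).start()
```

Every access here waits for a reply, which mirrors a non-posted PCIe read. Since PCIe memory writes are posted, a real implementation could skip the write acknowledgement, and setting `TCP_NODELAY` on the socket usually helps with such small request/response messages.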

On the VHDL side, a special entity must be used in place of the Xilinx PCIe IP. This is a shortcoming, but nothing prevents implementing a fully Xilinx-compliant VHDL entity.

Conclusion

This was a short introduction to the VPCIe framework. The project and its implementation are still young, but stable enough for relevant simulations. If you find it interesting, please let me know and I will post further articles, in particular on how to set up a platform and implement a PCIe-based device in VHDL. VPCIe is also an open source project, and contributions are welcome.

Comments

Will follow closely on github, and will try to help ASAP. Let's see if I can catch up.

Thanks for your comments. VPCIe may actually help you, so please keep me informed of your progress.

PCIe is really powerful, as it provides low-latency accesses, data integrity and ordering, flow control, bandwidth scaling, and more. However, it can be quite difficult to use, even though the Xilinx-provided IP greatly simplifies the developer's life. To make things easier, we developed a PCIe-centric interconnect. It is not publicly available for now, but if you think it can help you, please drop me an email.

http://www.ohwr.org/projects/e-bone